03_GriddedDiagnostics_Divergence_Isotachs_ERA5

Overview¶

Select a date and access the ERA5 dataset

Subset the desired variables along their dimensions

Calculate and visualize diagnostic quantities.

Imports¶

import xarray as xr

import pandas as pd

import numpy as np

from datetime import datetime as dt

from metpy.units import units

import metpy.calc as mpcalc

import cartopy.crs as ccrs

import cartopy.feature as cfeature

import matplotlib.pyplot as pltSpecify a starting and ending date/time, regional extent, vertical levels, and access/subset the ERA5¶

# 1. Set the bounds of your map.

# Set the four values in the code cell below. Test the values.

latN = 50

latS = 20

lonW = -100

lonE = -60

if ( latN > latS ) and ( lonE > lonW):

cLat = (latN + latS)/2

cLon = (lonW + lonE)/2

else:

e = ("Northern (eastern) latitude (longitude) must be greater than southern (western). Go back and re-adjust!")

raise ValueError(f"Error in lat/lon specification: {e}")

# Recall that in ERA5, longitudes run between 0 and 360, not -180 and 180

if (lonW < 0 ):

lonW = lonW + 360

if (lonE < 0 ):

lonE = lonE + 360

# 2. Select the map projection for your figure.

proj_map = ccrs.LambertConformal(central_latitude=cLat, central_longitude=cLon)

# 3. Select the projection on which the dataset is based

proj_data = ccrs.PlateCarree()

# 4. Select the resolution of the Cartopy cartographic features, and, if desired, MetPy's county shapefiles.

# Uncomment / comment as desired

#res = '10m' # Most detailed, best for small regions (e.g. NYS)

res = '50m' # Medium detail, best for medium-sized regions (e.g. CONUS)

#res = '110m' # Least detailed, best for large/global maps

#res_county = '5m' # Higher-res MetPy US County shapefiles

res_county = '20m' # Lower-res MetPy US County shapefiles

# Apply the latitude/longitude ranges and extend the data region if desired

expand_lon = 1

expand_lat = 1

latRange = np.arange(latS - expand_lat,latN + expand_lat,.25) # expand the data range a bit beyond the plot range

lonRange = np.arange((lonW - expand_lon),(lonE + expand_lon),.25) # Need to match longitude values to those of the coordinate variable

# Specify desired pressure levels: in this case, a list of one or more

plevel_list = [300]

startYear = 1979

startMonth = 2

startDay = 19

startHour = 12

startMinute = 0

startDateTime = dt(startYear,startMonth,startDay, startHour, startMinute)

endYear = 1979

endMonth = 2

endDay = 19

endHour = 18

endMinute = 0

endDateTime = dt(endYear,endMonth,endDay, endHour, endMinute)

delta_time = endDateTime - startDateTime

time_range_max = 7*86400

if (delta_time.total_seconds() > time_range_max):

raise RuntimeError("Your time range must not exceed 7 days. Go back and try again.")

# Create a list of date and times based on what we specified for the initial and final times,

# using Pandas’ date_range function

dateRange = pd.date_range(startDateTime, endDateTime,freq="6h")Print out the lat/lon, pressure, and date/time arrays

print ("Summary of subsetted dimensions: \n\n")

print (f'Longitude Range: {lonRange} \n')

print (f'Latitude Range: {latRange} \n')

print (f'Pressure levels: {plevel_list} \n')

print(f'Date Range: {dateRange}')Summary of subsetted dimensions:

Longitude Range: [259. 259.25 259.5 259.75 260. 260.25 260.5 260.75 261. 261.25

261.5 261.75 262. 262.25 262.5 262.75 263. 263.25 263.5 263.75

264. 264.25 264.5 264.75 265. 265.25 265.5 265.75 266. 266.25

266.5 266.75 267. 267.25 267.5 267.75 268. 268.25 268.5 268.75

269. 269.25 269.5 269.75 270. 270.25 270.5 270.75 271. 271.25

271.5 271.75 272. 272.25 272.5 272.75 273. 273.25 273.5 273.75

274. 274.25 274.5 274.75 275. 275.25 275.5 275.75 276. 276.25

276.5 276.75 277. 277.25 277.5 277.75 278. 278.25 278.5 278.75

279. 279.25 279.5 279.75 280. 280.25 280.5 280.75 281. 281.25

281.5 281.75 282. 282.25 282.5 282.75 283. 283.25 283.5 283.75

284. 284.25 284.5 284.75 285. 285.25 285.5 285.75 286. 286.25

286.5 286.75 287. 287.25 287.5 287.75 288. 288.25 288.5 288.75

289. 289.25 289.5 289.75 290. 290.25 290.5 290.75 291. 291.25

291.5 291.75 292. 292.25 292.5 292.75 293. 293.25 293.5 293.75

294. 294.25 294.5 294.75 295. 295.25 295.5 295.75 296. 296.25

296.5 296.75 297. 297.25 297.5 297.75 298. 298.25 298.5 298.75

299. 299.25 299.5 299.75 300. 300.25 300.5 300.75]

Latitude Range: [19. 19.25 19.5 19.75 20. 20.25 20.5 20.75 21. 21.25 21.5 21.75

22. 22.25 22.5 22.75 23. 23.25 23.5 23.75 24. 24.25 24.5 24.75

25. 25.25 25.5 25.75 26. 26.25 26.5 26.75 27. 27.25 27.5 27.75

28. 28.25 28.5 28.75 29. 29.25 29.5 29.75 30. 30.25 30.5 30.75

31. 31.25 31.5 31.75 32. 32.25 32.5 32.75 33. 33.25 33.5 33.75

34. 34.25 34.5 34.75 35. 35.25 35.5 35.75 36. 36.25 36.5 36.75

37. 37.25 37.5 37.75 38. 38.25 38.5 38.75 39. 39.25 39.5 39.75

40. 40.25 40.5 40.75 41. 41.25 41.5 41.75 42. 42.25 42.5 42.75

43. 43.25 43.5 43.75 44. 44.25 44.5 44.75 45. 45.25 45.5 45.75

46. 46.25 46.5 46.75 47. 47.25 47.5 47.75 48. 48.25 48.5 48.75

49. 49.25 49.5 49.75 50. 50.25 50.5 50.75]

Pressure levels: [300]

Date Range: DatetimeIndex(['1979-02-19 12:00:00', '1979-02-19 18:00:00'], dtype='datetime64[ns]', freq='6h')

Access either the cloud-served or local ERA5 repository. Then subset it according to your choices above.¶

endDate = dt(2023,1,10)

if (endDateTime <= endDate): # Use WeatherBench archive

cloud_source = True

ds = xr.open_dataset(

'gs://weatherbench2/datasets/era5/1959-2023_01_10-wb13-6h-1440x721.zarr',

chunks={'time': 48},

consolidated=True,

engine='zarr'

)

# Attach units to the pressure coordinate

ds.coords['level'].attrs['units'] = 'hPa'

# Rename the variable names in this dataset so they use their corresponding short_name attributes

# Construct a dictionary whose keys are the original variable names, and whose values are their

# short names

rename_data_vars = {}

seen_short_names = set()

for var_name in ds.data_vars:

short = ds[var_name].attrs.get("short_name")

# Only rename if:

# 1. short_name exists

# 2. We haven't already used it

if short and short not in seen_short_names:

rename_data_vars[var_name] = short

seen_short_names.add(short)

# Apply renaming

ds = ds.rename(rename_data_vars)

else: # Use local archive

import glob, os

cloud_source = False

input_directory = '/free/ktyle/era5'

files = glob.glob(os.path.join(input_directory,'*_era5.nc'))

ds = xr.open_mfdataset(files,coords='minimal',compat='override')

# Rename two of the coordinate variables so they match what is in the WeatherBench archive

ds = ds.rename({'valid_time': 'time', 'pressure_level': 'level'})

# Perform the initial subset

ds = ds.sel(time = dateRange, longitude = lonRange, latitude = latRange, level = plevel_list)Chunks: See this link for more info about chunking in Xarray.

Examine the subsetted Dataset

dsLoading...

Now create DataArray objects for the data variables we are interested in. Since we are interested in computing divergence, as well as displaying wind barbs and geopotential heights, what variables might we require?¶

Z = ds['z']

U = ds['u']

V = ds['v']ULoading...

In preparation for calculating diagnostics, let’s first select just a single isobaric surface from the four that we previously listed. Subset the data variables based on that level.

p_level = 300

Zp = Z.sel(level=p_level).compute()

Up = U.sel(level=p_level).compute()

Vp = V.sel(level=p_level).compute()Calculate the windspeed¶

# Calculate windspeed and convert to knots.

WSPDp = mpcalc.wind_speed(Up, Vp)

WSPDkts = WSPDp.metpy.convert_units('kts')We will draw filled contours of isotachs every 25 knots beginning at 100 knots.

wspdInc = 25

wspdContours = np.arange (100, 200, wspdInc)Calculate divergence¶

div = mpcalc.divergence(Up, Vp)Print out the value of divergence at one of the gridpoints. Since it still has units attached, we get a nice sense as to how it scales.¶

div.sel(latitude=40, longitude=285) # remember, ERA5 represents W Hemisphere longitudes from 180 to 360Loading...

Apply the Gaussian smoother on divergence¶

sigma = 7.0 # this depends on how noisy your data is, adjust as necessary

divSmooth = mpcalc.smooth_gaussian(div, sigma)Scale these values up by 1e6 (or 1 * 10**6, or 1,000,000) and find the min/max values. Use these to inform the setting of the contour fill intervals.¶

scale = 1e6

divSmooth = divSmooth * scale

minDiv = divSmooth.min().values

maxDiv = divSmooth.max().values

print (minDiv, maxDiv)-194.1274597961225 250.493192267742

Usually, we wish to avoid plotting the “zero” contour line for diagnostic quantities such as divergence, advection, and frontogenesis. Thus, create two lists of values … one for negative and one for positive.¶

divInc = 20

negDivContours = np.arange (-160, 0, divInc)

posDivContours = np.arange (10, 160, divInc)Convert geopotential to geopotential height and express in decameters.¶

Zdam = mpcalc.geopotential_to_height(Zp).metpy.convert_units('dam')Choose an appropriate contour interval for geopotential heights … a common convention is: from surface up through 700 hPa: 3 dam; above that, 6 dam to 400 and then 9 or 12 dam from 400 and above.¶

if (p_level >= 700):

incZ = 3

elif (p_level >= 400):

incZ = 6

elif (p_level >= 150):

incZ = 9

else:

incZ = 12zContours = np.arange(0,3000,incZ)We will plot wind barbs in knots, so convert U and V to knots.¶

Ukts = Up.metpy.convert_units('kts')

Vkts = Vp.metpy.convert_units('kts')Now, let’s prepare to make a nice-looking map.¶

Desired features in order of plotting (use zorder to ensure you get the order you want):¶

Contour fills of isotachs

Contour lines of divergence (positive and negative values, contrasting colors and styles for + and -)

Contour lines of geopotential heights

Wind barbs in knots

lats = Up.latitude

lons = Up.longitudeconstrainLat, constrainLon = (0.7, 6.5)for time in dateRange:

print(f'Processing {time}')

# Create the time strings, for the figure title as well as for the file name.

timeStr = dt.strftime(time,format="%Y-%m-%d %H%M UTC")

timeStrFile = dt.strftime(time,format="%Y%m%d%H")

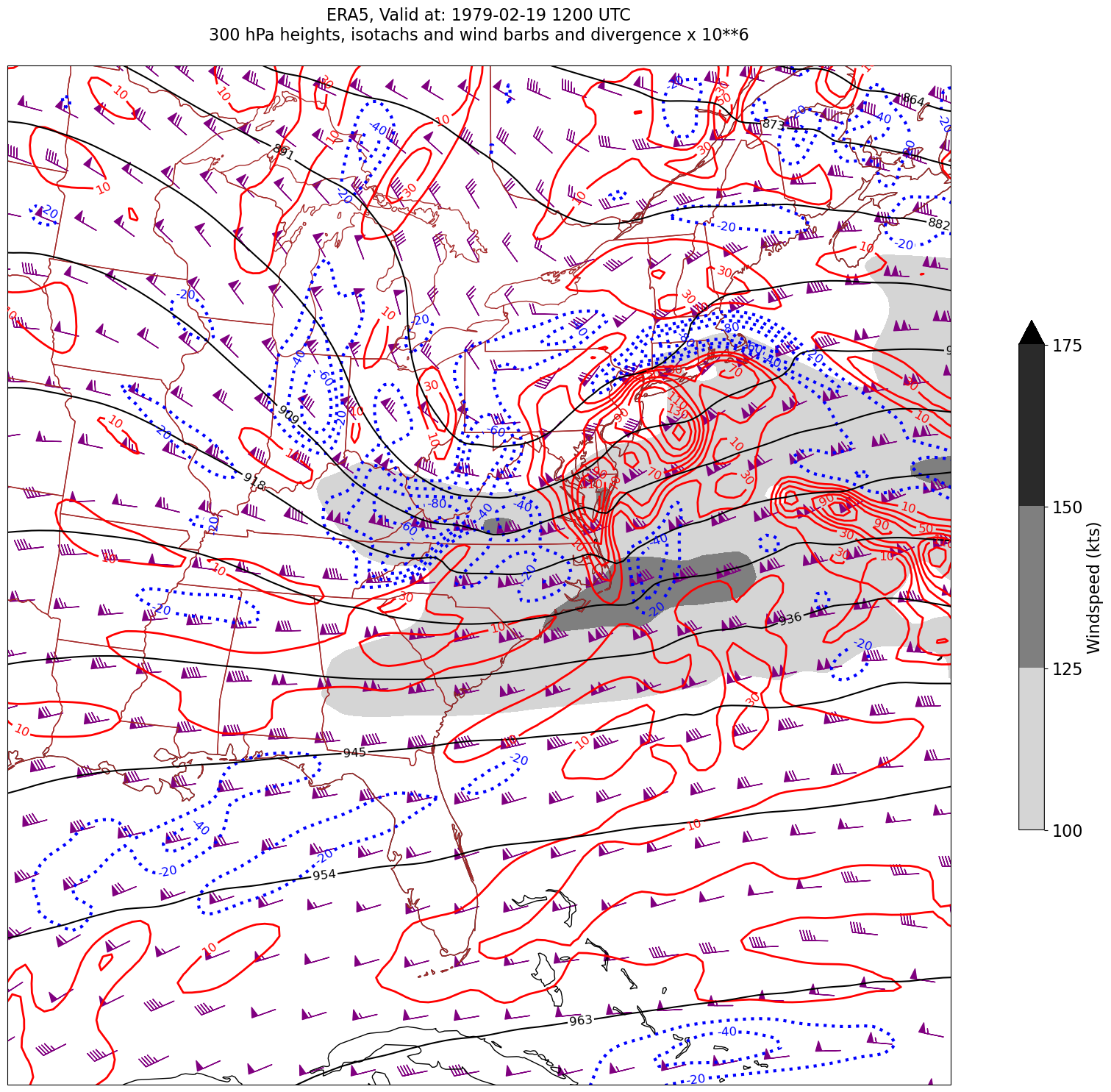

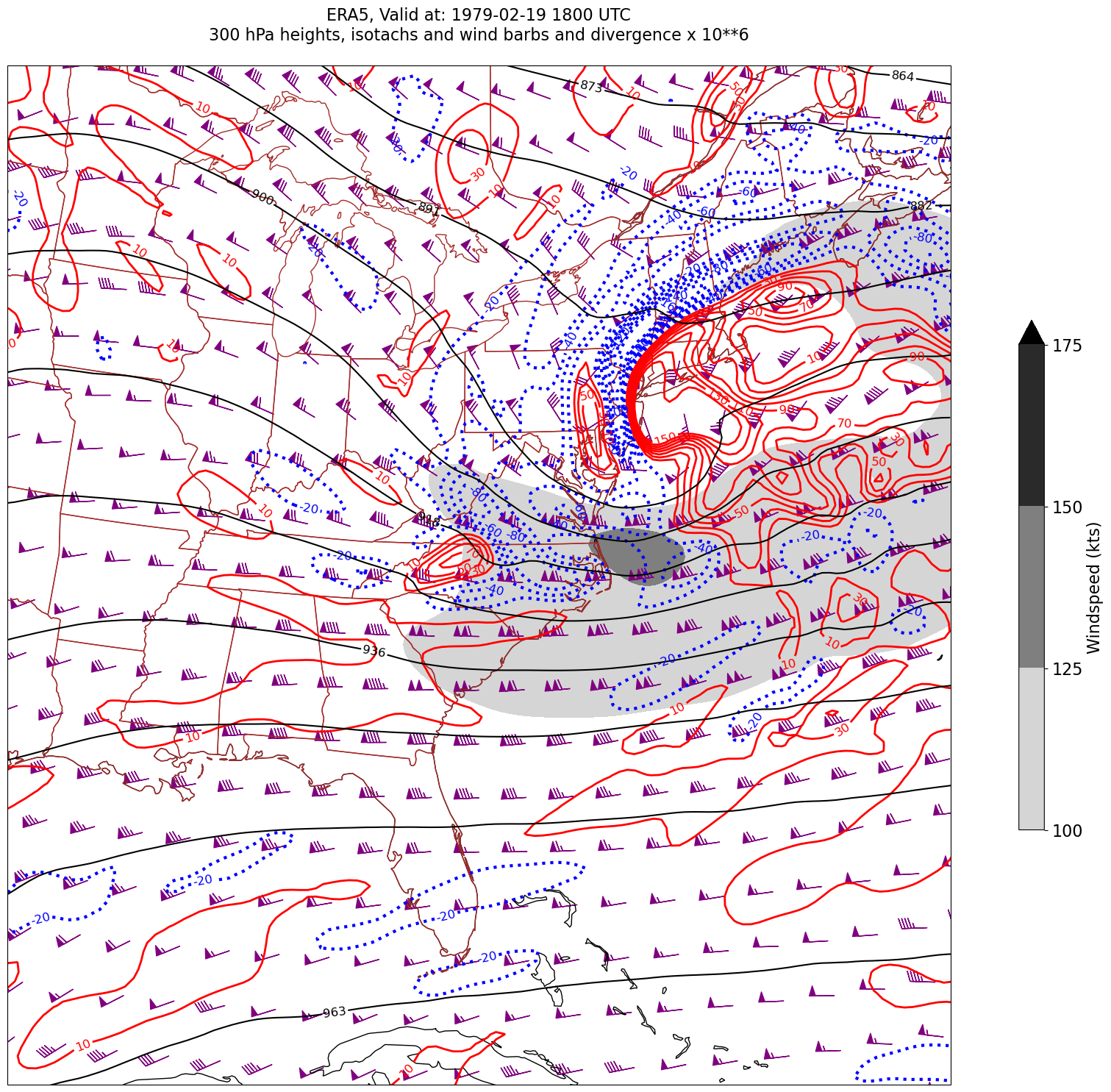

tl1 = f'ERA5, Valid at: {timeStr}'

tl2 = f'{p_level} hPa heights, isotachs and wind barbs and divergence x 10**6'

title_line = f'{tl1}\n{tl2}\n'

fig = plt.figure(figsize=(24,18)) # Increase size to adjust for the constrained lats/lons

ax = fig.add_subplot(1,1,1,projection=proj_map)

ax.set_extent ([lonW+constrainLon,lonE-constrainLon,latS+constrainLat,latN-constrainLat])

ax.add_feature(cfeature.COASTLINE.with_scale(res))

ax.add_feature(cfeature.STATES.with_scale(res),edgecolor='brown')

# Need to use Xarray's sel method here to specify the current time for any DataArray you are plotting.

# 1. Contour fills of isotachs

CF = ax.contourf(lons,lats,WSPDkts.sel(time=time),levels=wspdContours,transform=proj_data,cmap='gist_gray_r',extend='max')

cbar = plt.colorbar(CF,shrink=0.5)

cbar.ax.tick_params(labelsize=16)

cbar.ax.set_ylabel("Windspeed (kts)",fontsize=16)

# 2. Contour lines of divergence (first negative values, then positive)

cDivNeg = ax.contour(lons, lats, divSmooth.sel(time=time), levels=negDivContours, colors='blue',linestyles='dotted',linewidths=3, transform=proj_data)

ax.clabel(cDivNeg, inline=1, fontsize=12,fmt='%.0f')

cDivPos = ax.contour(lons, lats, divSmooth.sel(time=time), levels=posDivContours, colors='red',linewidths=2, transform=proj_data)

ax.clabel(cDivPos, inline=1, fontsize=12,fmt='%.0f')

# 3. Contour lines of geopotential heights

cZ = ax.contour(lons, lats, Zdam.sel(time=time), levels=zContours, colors='black', transform=proj_data)

ax.clabel(cZ, inline=1, fontsize=12, fmt='%.0f')

# 4. wind barbs

# Plotting wind barbs uses the ax.barbs method. Here, you can't pass in the DataArray directly; you can only pass in the array's values.

# Also need to sample (skip) a selected # of points to keep the plot readable.

# Remember to use Xarray's sel method here as well to specify the current time.

skip = 6

ax.barbs(lons[::skip],lats[::skip],Ukts.sel(time=time)[::skip,::skip].values, Vkts.sel(time=time)[::skip,::skip].values, color='purple',transform=proj_data)

title = ax.set_title(title_line,fontsize=16)

# Generate a string for the file name and save the graphic to your current directory.

fileName = f'{timeStrFile}_ERA5_{p_level}_WndDivHght'

fig.savefig(fileName)Processing 1979-02-19 12:00:00

Processing 1979-02-19 18:00:00

What’s next?¶

We will calculate a different diagnostic from another event.